
Compression has long been used in data transmission by applications and services that aim to reduce network bandwidth consumption and transmission time.

Today, most applications and services offered on the Internet already use efficient compression methods. This trend should become more common as more services and applications migrate to the cloud, since most service contract models from providers such as Amazon Web Services or Microsoft Azure are based, among other things, on the amount of data transmitted.

At the same time, the increasing and evolving threats to data security and the emergence of strict data protection regulations, such as LGPD in Brazil and GDPR in Europe, make data encryption as important as, or more important than, data compression.

However, many applications compress data before it is encrypted, which, in some cases, may compromise the confidentiality of the transmitted data.

This article introduces some of the security issues that arise when data is compressed and then encrypted, and shows that, in certain cases, it is safer to only encrypt it. The discussion is based on the operation of the two algorithms that make up Deflate, one of the most commonly used compression methods (it is the method used by gzip).

Compression Algorithms

Generally speaking, the main objective of a compression algorithm is to reduce the space required to store the same amount of information. Deflate, for example, combines two algorithms, LZ77 and Huffman Coding:

● LZ77: Algorithm responsible for reducing the redundancy of data to be compressed. These redundancies are replaced by references indicating the position where the same sequence of symbols previously appeared, which makes it possible to reduce the original size of the data.

● Huffman Coding: The result of LZ77 is used as input to Huffman Coding. This algorithm uses variable-length codes to represent symbols, so the code size is not identical for all symbols. Each code is derived from a binary tree built so that lower-frequency symbols sit farther from the root (and therefore receive longer bit strings), while higher-frequency symbols sit closer to the root and receive shorter bit strings.
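The two stages described above can be sketched in a few lines of Python. This is only a toy illustration, not what gzip or zlib actually implement: the function names `lz77_tokens` and `huffman_code_lengths`, the window size, and the minimum match length are all assumptions chosen for readability.

```python
import heapq
from collections import Counter

def lz77_tokens(data: str, window: int = 32, min_len: int = 3) -> list:
    """Toy LZ77: emit literals or (distance, length) back-references."""
    tokens, i = [], 0
    while i < len(data):
        best_len, best_dist = 0, 0
        # Search the sliding window for the longest earlier match.
        for j in range(max(0, i - window), i):
            k = 0
            while i + k < len(data) and data[j + k] == data[i + k]:
                k += 1
            if k > best_len:
                best_len, best_dist = k, i - j
        if best_len >= min_len:
            tokens.append((best_dist, best_len))
            i += best_len
        else:
            tokens.append(data[i])
            i += 1
    return tokens

def huffman_code_lengths(text: str) -> dict:
    """Return the Huffman code length in bits for each symbol of text."""
    freq = Counter(text)
    if len(freq) == 1:
        return {next(iter(freq)): 1}
    # Heap entries: (weight, unique tie breaker, {symbol: depth so far}).
    heap = [(w, n, {sym: 0}) for n, (sym, w) in enumerate(freq.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        # Merging two subtrees pushes every symbol one level deeper.
        merged = {s: d + 1 for s, d in {**left, **right}.items()}
        heapq.heappush(heap, (w1 + w2, next_id, merged))
        next_id += 1
    return heap[0][2]

tokens = lz77_tokens("abcabcabc")
lengths = huffman_code_lengths("aaaaaaaabbbbccd")
print(tokens)                    # ['a', 'b', 'c', (3, 6)]
print(sorted(lengths.items()))   # a: 1 bit, b: 2 bits, c and d: 3 bits each
```

The repeated "abc" collapses into a single back-reference, and the most frequent symbol receives the shortest code, which is the behaviour the two bullets describe.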

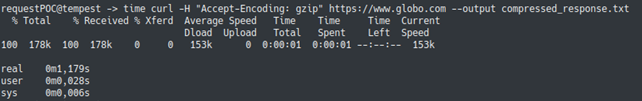

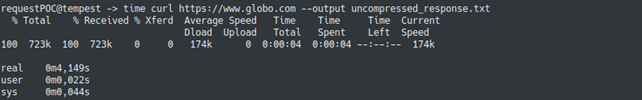

In an HTTP request, the Accept-Encoding header field lets the client state which content encodings (usually compression methods) it accepts in the response. Below are the results of a test in which we compared the size and response time of two requests, one that uses compression and one that does not. As the tests were performed in the same environment, with requests to the same website, the difference in response times can be attributed to data compression (and not to some external factor), which demonstrates its effectiveness.
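As a rough illustration of this negotiation, the sketch below compresses a response body only when the client's Accept-Encoding value lists gzip. The helper `encode_body` and the sample page are hypothetical, and the parsing is deliberately simplified (it ignores quality values such as `gzip;q=0`); it does not reproduce the test described above.

```python
import gzip

def encode_body(body: bytes, accept_encoding: str):
    """Hypothetical helper: gzip the body only if the client advertises gzip."""
    # Simplification: strip any ";q=..." parameter and ignore its value.
    offered = [t.split(";")[0].strip().lower() for t in accept_encoding.split(",")]
    if "gzip" in offered:
        return gzip.compress(body), {"Content-Encoding": "gzip"}
    return body, {}

# A made-up, highly repetitive HTML fragment standing in for a real page.
page = b"<li class='item'>row</li>\n" * 500

plain, plain_headers = encode_body(page, "identity")
packed, packed_headers = encode_body(page, "gzip, deflate, br")
print(len(plain), len(packed))  # the gzip response is far smaller
```

Fewer bytes on the wire is what shortens the response time in a comparison like the one described above.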

Compression-Encryption Combinations

As mentioned earlier, compression is important for reducing the size of data in transit and, consequently, for reducing costs and improving performance. It is just as important, however, to ensure that the data in transit is secure. Currently, two scenarios for combining data compression and encryption are considered, namely:

Compress after encryption: This option is not considered efficient. As we saw in the explanation of the Deflate compression algorithm, a high compression ratio requires redundancies and repetitions in the data to be compressed, and encrypted data does not exhibit them.

Compress before encrypting: This is the option used in most cases. As shown earlier, there is a significant reduction in data transmission time and bandwidth consumption when services use compression.
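The inefficiency of the first scenario can be checked with a small experiment. The sketch below makes one assumption: the output of a sound cipher is indistinguishable from random bytes, so `os.urandom` is used as a stand-in for real ciphertext rather than calling an encryption library. The sample plaintext is invented.

```python
import os
import zlib

plaintext = b"user=alice&role=admin&" * 200        # highly redundant input
ciphertext_like = os.urandom(len(plaintext))        # stand-in for encrypted bytes

compressed_plain = zlib.compress(plaintext)
compressed_cipher = zlib.compress(ciphertext_like)

# Redundant plaintext shrinks dramatically; random-looking bytes do not
# shrink at all and even gain a few bytes of Deflate framing overhead.
print(len(plaintext), len(compressed_plain), len(compressed_cipher))
```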

Although the option of compressing before encryption is the most used, it is susceptible to attacks that may compromise data confidentiality, as we will see below.

Attacks on data encrypted after compression

One possible attack against data that was compressed before it was encrypted uses JavaScript code running in the victim's browser to issue a large number of brute-force requests. This attack allows a threat agent to infer the encrypted transmitted data from the size of the compressed data. It is possible because of a weakness intrinsic to the way compression algorithms operate: the more an input repeats content that is already present, the smaller the compressed output becomes.

Attacks of this type are called ‘Side-Channel Attacks’. One of the first HTTP-focused ‘Side-Channel’ attacks was named ‘CRIME’, and it was used to gather information from HTTP headers that were compressed and encrypted by the TLS protocol. This attack was first demonstrated in 2012 at the Ekoparty security conference, but it was not widely used, as only a small portion of servers used TLS-level compression for HTTP requests.

When browsers such as Google Chrome disabled TLS compression, the viability of CRIME diminished further. However, the methodology of this attack served as the basis for another type of attack: BREACH, presented in 2013 at the Black Hat security conference. Whereas CRIME exploits a vulnerability in TLS compression to obtain request header information, BREACH can obtain information from the HTTPS response body when the server uses HTTP compression itself.
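The length side channel that these attacks rely on can be illustrated with a simplified sketch. It is not the CRIME or BREACH exploit code: the page template, the secret `token=7f3a9c`, and the helper `observed_length` are all invented for the example, and the encryption layer is assumed to preserve length, so the compressed size is used directly as what an eavesdropper observes.

```python
import zlib

SECRET = "token=7f3a9c"  # hypothetical secret embedded in every response

def observed_length(attacker_input: str) -> int:
    """Size an eavesdropper would see for one response.

    Assumption: encryption preserves length, so the compressed size
    is what leaks on the wire.
    """
    # The response reflects attacker-controlled input next to the secret.
    page = f"<p>search: {attacker_input}</p><input value='{SECRET}'>"
    return len(zlib.compress(page.encode()))

guesses = ["token=1b2d8e", "token=x4k0qz", "token=7f3a9c"]
sizes = {guess: observed_length(guess) for guess in guesses}

# The guess that matches the secret lets LZ77 replace the whole secret
# with one back-reference, so that response is the shortest.
best = min(sizes, key=sizes.get)
print(sizes, best)
```

Real attacks refine guesses a character at a time and have to cope with noise and with the fact that a single matching character may change the size by a byte or less, but the principle is the same: the attacker never decrypts anything and only compares sizes.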

At Black Hat 2018, another attack that compromises the confidentiality of data compressed before encryption was presented. Named VORACLE, this attack makes it possible to obtain data from VPN tunnels that use compression. For it to be viable, the victim must be using protocols that are inherently insecure, such as HTTP. This means that if the victim visits, for example, a website that uses the HTTP protocol, and the attacker can inject data into the VPN tunnel, the encrypted and compressed content can be inferred from the size of the traffic passing through the tunnel.

Compress vs. Encrypt: Ensuring Data Encryption

Encryption is essential wherever very sensitive information is transmitted. In these same situations, however, compressing the data may also be highly desirable, for the benefits already listed.

Because using compression and encryption at the same time can compromise data confidentiality, it is increasingly common for systems to compress only the parts that do not contain sensitive information. To this end, some of the most popular web servers, such as Nginx, allow policies to be set that specify which pages of a website should be compressed by the server when they are accessed.

However, in some cases it is not possible to distinguish sensitive from non-sensitive parts of the transmitted data. For example, in the VPN tunnel scenario mentioned in the previous section, OpenVPN, a provider of a very popular VPN solution, recommended that all VPN-level compression be disabled to mitigate the VORACLE attack. This recommendation took into consideration a study which demonstrated that VPN-level compression is ineffective compared to the compression used in the upper layers of the OSI model. In addition, in many cases much of the data to be transmitted has already been compressed by upper-layer protocols or is already encrypted, which also decreases the effectiveness of compression, as discussed above.

Thus, in certain scenarios (such as OpenVPN), data compression should be disabled in order to ensure confidentiality. In addition, institutions and regulations increasingly treat almost all user data as sensitive information. So even if a given system, such as Nginx, offers the ability to compress only part of the data in transit, many websites cannot use this feature, because user information is present on almost every page. For these sites not to be susceptible to compression side-channel attacks, compression must be completely disabled.

Across the scenarios and protocols described in this article, the common factor is the use of encryption after compression. Whenever that is the case and an attacker is able to inject data before the compression and encryption steps, compression side-channel attacks become feasible. Therefore, even considering the increasing presence of data compression in systems and applications, its use in conjunction with encryption is not the best option.